Table of Contents

- The System That Worked (Until It Didn't)

- How Coupling Accumulates

- What Decoupling Actually Changes

- Four Decisions That Made It Work

- What Became Possible After Decoupling

- Real Cases: Provider Lock-In in Practice

- Where Decoupling Pays Off Most

- Decoupled Monolith vs Microservices

- When to Decouple – And When Not To

- Common Mistakes

- The Architecture Is the Strategy


You don’t choose between a monolith, decoupled architecture, and microservices – you move through them in stages.

Most products start as a monolith, and that’s the right call. When integrations multiply, and providers start creating friction, you decouple.

When the team grows large enough that people are blocking each other on deployments, you move toward microservices.

The architecture that fits your product isn’t a one-time decision – it’s a response to where your business is right now, and it should change as the business does.

At some point, you may ask yourself: what makes a product impossible to scale, even when the team is strong, and the market is ready? Usually, it’s not the code quality or the roadmap. It’s an architecture that was never designed to change. Tight coupling to a single provider, integration logic buried in the core, no separation between what the business does and what a vendor’s API happens to return – these are the things that quietly turn a working system into one that can’t move.

Everything you read here is about how that happens, what decoupled architecture actually fixes, and where microservices fit into the picture.

Before going further, here is a plain definition of each of the three concepts:

Monolith: A single application where all logic – business, integration, data – lives and deploys together. Fast to start, harder to change as it grows.

Decoupled Architecture: A system where components – especially integrations – are separated by clear boundaries. A change in one part doesn’t break another. External providers connect through an integration layer, not directly into the core.

Microservices: An architecture where the application itself is split into independent services, each owning its domain, deploying separately, and communicating via APIs or events. Powerful at scale, operationally complex to run.

How Tight Coupling Breaks a Decoupled Architecture Before It Starts

It started, as these things usually do, with a reasonable decision under pressure.

The team needed to launch. They had one PMS vendor, one payment provider, and one set of API endpoints to worry about. Building the integration logic directly into the core made sense – fewer abstractions, faster delivery, one less thing to explain to a new engineer. The product launched. Customers signed on.

For a while, the architecture was invisible in the best possible way. Nobody talked about it because nothing was breaking.

Then the PMS vendor released a new API version. It wasn’t a dramatic change: a restructured response format, a few renamed fields, a new auth flow. In a healthy system, that gets absorbed at the integration layer, and everyone moves on. But this team didn’t have an integration layer. The PMS data model had become the core data model. The vendor’s field names were scattered across the codebase. Three internal services broke simultaneously on a Tuesday morning, and the on-call engineer spent the day tracing dependencies that nobody had mapped.

This is the moment when the difference between coupled and decoupled architecture stops being theoretical. It becomes a practical question: how many places in this codebase know what a “reservation” looks like according to a vendor that doesn’t care about internal logic?

The answer, in this case, was: too many.


How In-Core API Integration Debt Accumulates Over Time

The team fixed the Tuesday incident. They updated the field mappings, patched the broken services, and added a note to the backlog: refactor integration layer, someday.

Someday didn’t come. It rarely does.

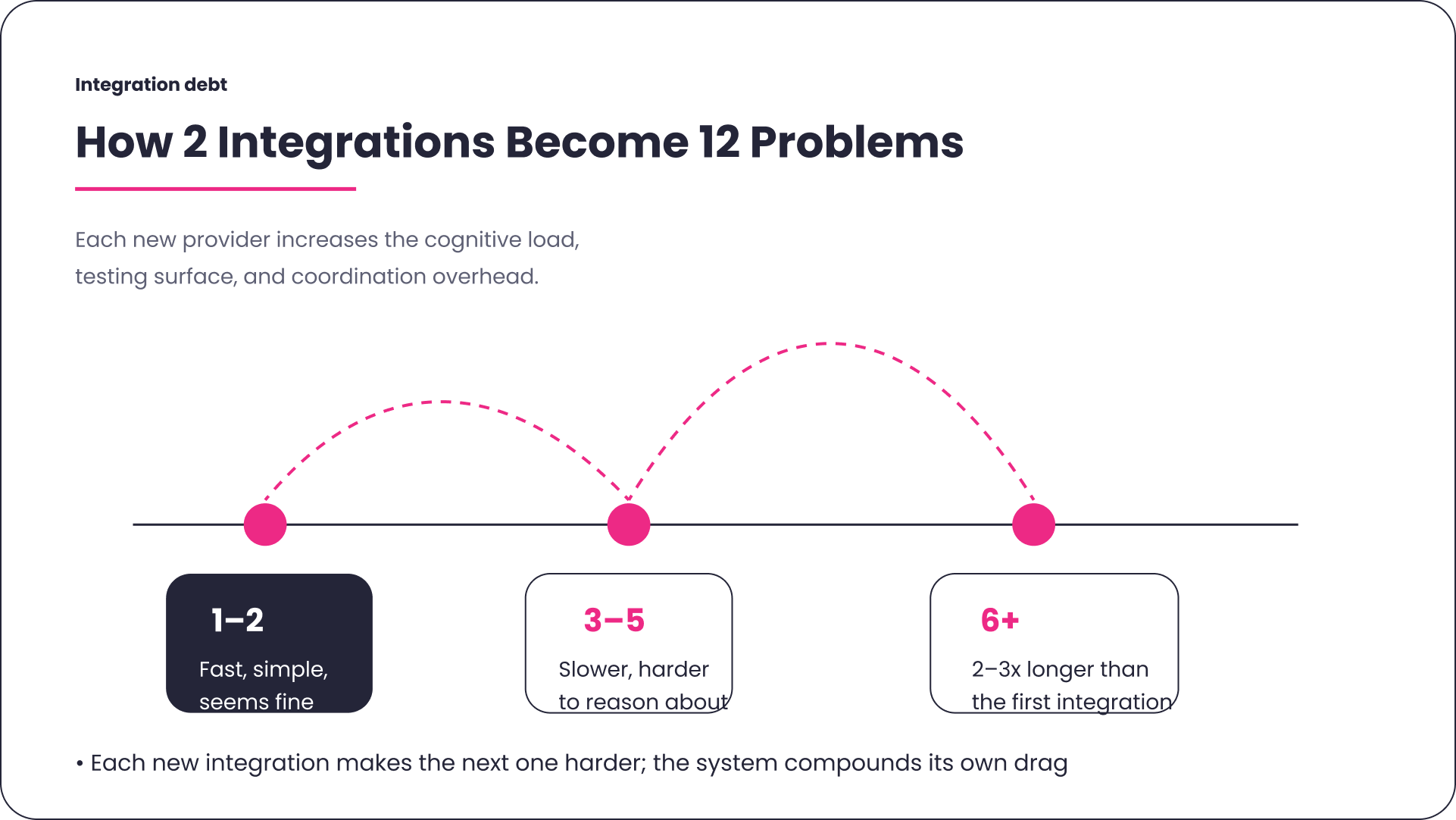

What came instead was a second integration – a channel manager, added under the same deadline pressure, built the same way. Then, a payment provider. Then a notification service. By the time the team had five integrations, adding a sixth took nearly three times as long as the first one had. Not because the sixth integration was harder, but because the codebase had become harder to reason about.

This is how coupled architecture accumulates: not through bad decisions, but through repeating a decision that was only ever meant to be temporary. The system doesn’t break – it gets heavier. Slower to change. More expensive to test. The kind of codebase where a new engineer asks “what does this function do?” and the honest answer is “it depends on which provider you’re talking to.”

The real cost shows up when the business needs to move. A new PMS partner wants to run a pilot. A payment provider is having reliability issues, and the team wants to switch. A channel manager raises prices, and someone suggests negotiating – or replacing them.

That’s when architecture stops being a technical concern and becomes a business constraint. The sales team is ready to close a deal. The engineering team is doing a different calculation. The answer they come back with is rarely the one the business wants to hear.

What Does Decoupled Software Architecture Actually Change?

The team eventually made the decision that had been sitting in the backlog for two years. They didn’t do it because a gap opened in the roadmap, but because the cost of not doing it had become impossible to ignore.

They built an integration layer. A dedicated piece of infrastructure between the core system and every external provider. The core talks to the layer; the layer talks to the vendors. What happens on either side of that boundary is no longer the other side’s problem.

The first thing they noticed was how much core logic had been written around provider-specific quirks. Field names, pagination patterns, error codes – all of it had leaked inward over time. Pulling it out was uncomfortable. But when it was done, the core finally described the business domain – reservations, availability, rates – in its own language, not in a dialect inherited from a vendor.

This is what decoupled software architecture looks like in practice. Not a diagram. A system where a breaking change from a provider lands at the integration layer, gets translated, and never reaches the core. Where a new engineer can understand what a “reservation” means in this system without first learning what the PMS thinks it means.
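To make that boundary concrete, here is a minimal Python sketch of an adapter in the integration layer. The vendor payload shapes, the field names (`resId`, `stay`, and so on), and the `Reservation` fields are all hypothetical. The point is that the old and the new response format both translate into the same core type, so a vendor version change stays inside the adapter and `nights()`, standing in for core logic, never notices.

```python
from dataclasses import dataclass
from datetime import date
from typing import Any, Mapping


@dataclass(frozen=True)
class Reservation:
    """The core's own definition of a reservation (hypothetical fields)."""
    reservation_id: str
    guest_name: str
    check_in: date
    check_out: date


def from_pms_v1(payload: Mapping[str, Any]) -> Reservation:
    # Hypothetical v1 shape: flat, vendor-specific field names.
    return Reservation(
        reservation_id=str(payload["resId"]),
        guest_name=payload["guestName"],
        check_in=date.fromisoformat(payload["arrival"]),
        check_out=date.fromisoformat(payload["departure"]),
    )


def from_pms_v2(payload: Mapping[str, Any]) -> Reservation:
    # Hypothetical v2 shape: renamed fields, nested guest and stay objects.
    guest, stay = payload["guest"], payload["stay"]
    return Reservation(
        reservation_id=str(payload["id"]),
        guest_name=f"{guest['first']} {guest['last']}",
        check_in=date.fromisoformat(stay["start"]),
        check_out=date.fromisoformat(stay["end"]),
    )


def nights(reservation: Reservation) -> int:
    # Core logic depends only on Reservation, never on a vendor payload.
    return (reservation.check_out - reservation.check_in).days


if __name__ == "__main__":
    old = from_pms_v1({"resId": 42, "guestName": "Ada Lovelace",
                       "arrival": "2024-05-01", "departure": "2024-05-04"})
    new = from_pms_v2({"id": "42", "guest": {"first": "Ada", "last": "Lovelace"},
                       "stay": {"start": "2024-05-01", "end": "2024-05-04"}})
    print(old == new, nights(new))  # True 3
```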

The decoupled system architecture they ended up with wasn’t exotic. It was a set of deliberate decisions – about where logic lives, what each layer is responsible for, and what “stable” means in the context of an interface. Those decisions, made consistently, changed what the system could absorb.

Decoupling is also the foundation that makes microservices viable later. You can’t run independent services that don’t break each other without first establishing where the boundaries are and how they communicate. Decoupling isn’t a step toward microservices – it’s the prerequisite.

What Are the Four Decisions That Made Decoupled Architecture Work?

Getting to a working decoupled architecture meant solving four specific problems that had been causing damage for years. Each fix felt almost obvious in retrospect, which is usually the sign that it was necessary.

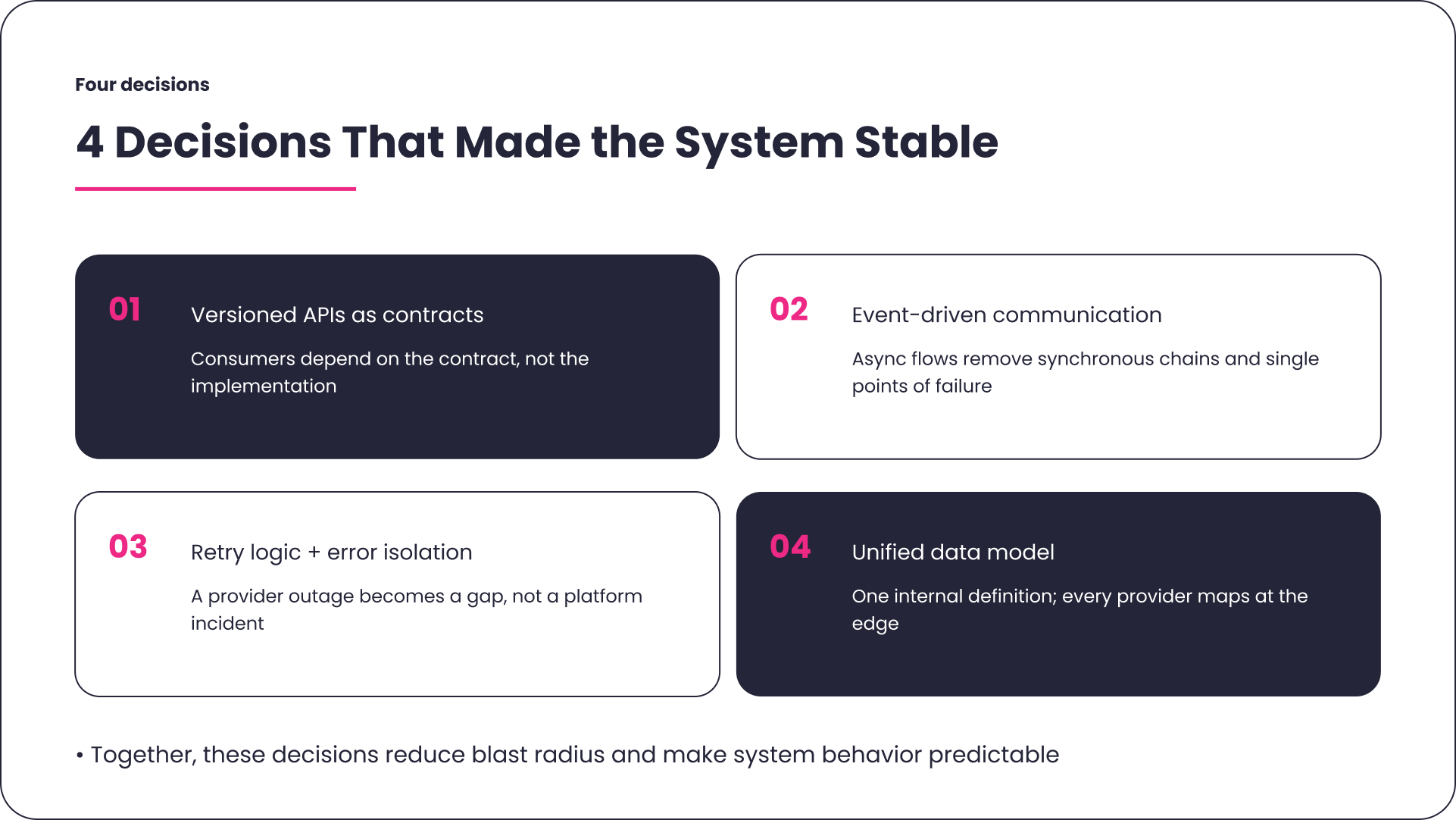

Versioned APIs as Integration Contracts

The first problem was invisible dependencies. Services called each other directly, without versioning, without stable interfaces. One team changed a response format to match a new provider’s schema. Another team’s service broke. Nobody meant for that to happen – there was just no structure to prevent it.

The fix: treat every internal interface as a contract. Versioned, explicit, stable. Internal consumers depend on the contract, not the implementation. A provider can change anything behind the interface as long as the contract holds. Following API integration best practices from the beginning would have prevented most of this, but the important thing was that it was fixable.
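A minimal sketch of what “depend on the contract, not the implementation” can look like, assuming an internal reservation endpoint with two published versions. The `render_v1`/`render_v2` functions, the field names, and the `EUR` currency are hypothetical. The internal record can change freely; each published version keeps its own shape, and publishing v2 does not touch consumers that still ask for v1.

```python
from typing import Any, Callable, Dict

Record = Dict[str, Any]

# Internal record (hypothetical shape); free to change behind the contracts.
_internal: Record = {"id": "42", "guest": "Ada Lovelace", "total_cents": 45000}


def render_v1(r: Record) -> Record:
    # v1 contract: frozen once published. Total expressed in major units.
    return {"reservationId": r["id"], "guestName": r["guest"],
            "total": r["total_cents"] / 100}


def render_v2(r: Record) -> Record:
    # v2 contract: a new shape, published alongside v1 rather than replacing it.
    return {"id": r["id"], "guest": {"name": r["guest"]},
            "total": {"amountCents": r["total_cents"], "currency": "EUR"}}


CONTRACTS: Dict[str, Callable[[Record], Record]] = {"v1": render_v1, "v2": render_v2}


def get_reservation(version: str) -> Record:
    # Consumers name the contract version they depend on.
    render = CONTRACTS.get(version)
    if render is None:
        raise ValueError(f"unsupported contract version: {version}")
    return render(_internal)
```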

Event-Driven Communication Between Services

The second problem was synchronous chains. A booking confirmation called the PMS, then the payment provider, then the notification service – in sequence, each waiting for the one before it. When the PMS was slow, the whole chain was slow. When it was down, the booking failed entirely, even though payment and notifications were perfectly available.

The rewrite introduced async, event-driven flows. A booking publishes an event. The PMS sync, the payment capture, the confirmation email – each consumes that event independently, on its own schedule. The user gets a confirmation. The rest happens in the background. The system stops treating every provider as a requirement for every transaction.
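The event-driven flow can be sketched with an in-memory stand-in for a message broker. This is an illustration under stated assumptions, not a production design: a real system would use a durable broker, and the `InMemoryBus`, `BookingConfirmed`, and `confirm_booking` names are hypothetical. Publishing only enqueues the event, so the booking is confirmed even while one consumer (here, the PMS sync) is failing, and the failed event waits in that consumer’s queue.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, Dict


@dataclass(frozen=True)
class BookingConfirmed:
    booking_id: str


Handler = Callable[[BookingConfirmed], None]


@dataclass
class InMemoryBus:
    """Hypothetical stand-in for a durable broker: one queue per subscriber."""
    handlers: Dict[str, Handler] = field(default_factory=dict)
    queues: Dict[str, Deque[BookingConfirmed]] = field(default_factory=dict)

    def subscribe(self, name: str, handler: Handler) -> None:
        self.handlers[name] = handler
        self.queues[name] = deque()

    def publish(self, event: BookingConfirmed) -> None:
        # Publishing only enqueues; no consumer runs on the booking path.
        for queue in self.queues.values():
            queue.append(event)

    def drain(self, name: str) -> None:
        # Each consumer works through its own queue on its own schedule.
        # An event is removed only after its handler succeeds, so a failed
        # event stays queued for a later attempt.
        queue = self.queues[name]
        while queue:
            self.handlers[name](queue[0])
            queue.popleft()


def confirm_booking(bus: InMemoryBus, booking_id: str) -> str:
    bus.publish(BookingConfirmed(booking_id))
    return f"confirmed {booking_id}"  # returned to the user immediately
```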

Retry Logic and Integration Error Isolation

The third problem was blast radius. When a provider failed, the failure propagated. An exception from a channel manager surfaced as a user-facing error. A timeout from a rate provider degraded the search page. The on-call engineer would open a dashboard and see a platform incident – caused entirely by a third-party service the platform had no control over.

Error isolation changed the shape of failures. A provider outage became a gap in one data source, not a platform event. Retries with exponential backoff absorbed temporary outages. Circuit breakers stopped the system from hammering a struggling provider. The on-call rotation got quieter.
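Retries with exponential backoff and circuit breakers are standard patterns; the sketch below is one minimal way to combine them in Python, not the team’s actual code. The thresholds, delays, and the “full jitter” choice are assumptions. The clock and sleep functions are injectable so the behavior can be tested without real waiting.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised instead of calling a provider whose circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("provider circuit is open")
            # Half-open: let one trial call through. The failure count is
            # still at the threshold, so one more failure reopens the circuit.
            self.opened_at = None
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success closes the circuit fully
        return result


def retry_with_backoff(fn: Callable[[], T], attempts: int = 4,
                       base_delay: float = 0.2, max_delay: float = 5.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # the breaker says stop; retrying would defeat it
        except Exception:
            if attempt == attempts - 1:
                raise
            # Exponential backoff with full jitter: wait a random time in
            # [0, base_delay * 2**attempt], capped at max_delay.
            sleep(random.uniform(0, min(max_delay, base_delay * 2 ** attempt)))
    raise AssertionError("unreachable")
```

In use, a provider call would be wrapped twice, for example `retry_with_backoff(lambda: breaker.call(fetch_rates))`, where `fetch_rates` is a hypothetical provider client call. Once the breaker opens, the retry loop gives up immediately instead of continuing to hit the struggling provider.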

Unified Data Model Across Providers

The fourth problem was the one that had started everything: the codebase spoke in provider dialects. Each integration had brought its own vocabulary into the core. The fix was one internal data model – a single definition of what a reservation, a rate, and an availability window look like in this system’s terms.

Every provider maps to that model at the integration layer. Adding a new PMS means writing a mapping – not refactoring downstream logic. The core stops caring which vendor is on the other side, because the integration layer’s job is to make that irrelevant.
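The mapping step can be sketched as a registry of per-vendor mappers that all produce the same internal type. The vendors `pms_a` and `pms_b`, their payload shapes, and the `Availability` fields are hypothetical. Core logic such as `sellable_days` is written once against the internal model; in this sketch, supporting another PMS means registering one more mapper.

```python
from dataclasses import dataclass
from datetime import date
from typing import Any, Callable, Dict, List, Mapping


@dataclass(frozen=True)
class Availability:
    """Internal model: one room type on one day (hypothetical fields)."""
    room_type: str
    day: date
    rooms_left: int


Mapper = Callable[[Mapping[str, Any]], List[Availability]]
MAPPERS: Dict[str, Mapper] = {}


def register(vendor: str) -> Callable[[Mapper], Mapper]:
    # Adding a vendor means registering one more mapper, nothing downstream.
    def decorator(fn: Mapper) -> Mapper:
        MAPPERS[vendor] = fn
        return fn
    return decorator


@register("pms_a")
def map_pms_a(payload: Mapping[str, Any]) -> List[Availability]:
    # Hypothetical vendor A: a flat list of rows.
    return [Availability(row["roomCode"], date.fromisoformat(row["date"]), int(row["free"]))
            for row in payload["rows"]]


@register("pms_b")
def map_pms_b(payload: Mapping[str, Any]) -> List[Availability]:
    # Hypothetical vendor B: nested by date, counts sent as strings.
    return [Availability(room, date.fromisoformat(day), int(count))
            for day, rooms in payload["calendar"].items()
            for room, count in rooms.items()]


def to_core(vendor: str, payload: Mapping[str, Any]) -> List[Availability]:
    return MAPPERS[vendor](payload)


def sellable_days(items: List[Availability], room_type: str) -> List[date]:
    # Core logic, written once against the internal model.
    return sorted(a.day for a in items if a.room_type == room_type and a.rooms_left > 0)
```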

This is the foundation of a decoupled microservices architecture when the product eventually scales further. Each service speaks its own internal language; the integration layer handles translation. Service independence starts here – with a decision about where the vocabulary lives, enforced through versioned APIs that each service owns and controls.

What Became Possible After Decoupling

The benefits of decoupled architecture are usually described as a list. Scalability. Flexibility. Resilience. The problem with lists is that they don’t convey what it actually feels like when the architectural constraint is gone.

Here’s what it felt like for this team.

The PMS vendor came back for the annual contract renewal with a significant price increase. In previous years, this conversation had been mostly procedural – the team knew switching would cost months of engineering work. This year, the integration layer was live, and a second PMS had already been mapped to the internal model as a proof of concept. The negotiation went differently.

Independent deployments meant the payment team could ship on Tuesday without waiting for the channel manager team to finish their regression cycle. The quarterly release freeze quietly disappeared. Engineers stopped checking with each other before merging.

When a channel manager had a three-hour outage, the on-call engineer got a low-priority alert. Search results showed slightly reduced coverage. No bookings were lost. Nobody escalated. The outage was resolved, and the system caught up automatically.

These are the decoupled architecture advantages that don’t show up in architecture diagrams: the contract negotiation that goes in a different direction, the deployment that happens without a meeting, the outage that doesn’t become an incident. Each one removed a cost the team had stopped noticing, because that cost had always been there.


Practical Side of Decoupled Architecture

This team’s story isn’t unusual. The details change – the industry, the provider type, the moment when coupling became undeniable – but the shape of the problem is consistent.

A hospitality SaaS found itself unable to onboard a major hotel chain because the chain required a different PMS than the one the platform had been built around. The integration layer they built over the following three months wasn’t just a technical project – it was what made the contract possible. Without it, the deal wouldn’t have closed.

In another case, search latency dropped by around 40% after a move to an async model with isolated error handling. The providers didn’t get faster; the slow ones simply stopped blocking the fast ones.
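One way this “slow providers stop blocking fast ones” effect can arise is a concurrent fan-out with a per-provider timeout. The sketch below uses Python’s `asyncio`; the provider functions and the timeout value are assumptions, and it does not reproduce that platform’s implementation. Providers that time out or fail are dropped from the result, which gives reduced coverage rather than a failed search.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List, Optional, Tuple

Offers = List[str]
Provider = Callable[[], Awaitable[Offers]]


async def search_all(providers: Dict[str, Provider], timeout: float) -> Dict[str, Offers]:
    """Query every provider concurrently; keep only those that answer in time."""

    async def query(name: str, fetch: Provider) -> Tuple[str, Optional[Offers]]:
        try:
            return name, await asyncio.wait_for(fetch(), timeout)
        except Exception:
            # Timeouts and provider errors become a gap in coverage,
            # not a failed search. A real system would also log this.
            return name, None

    # Total latency is roughly bounded by the timeout, not by the sum
    # (or the slowest) of the provider response times.
    results = await asyncio.gather(*(query(n, f) for n, f in providers.items()))
    return {name: offers for name, offers in results if offers is not None}
```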

In a third case, introducing versioned APIs at internal boundaries let each team ship independently. Integrating multiple APIs without this structure is exactly how coordination overhead accumulates into something that looks, from the outside, like slow engineering but is actually an architectural tax.

These aren’t outliers. They’re what decoupled architecture looks like when applied: the moments where the architecture either makes something possible or makes it prohibitively expensive.

Where Decoupled System Architecture Pays Off Most

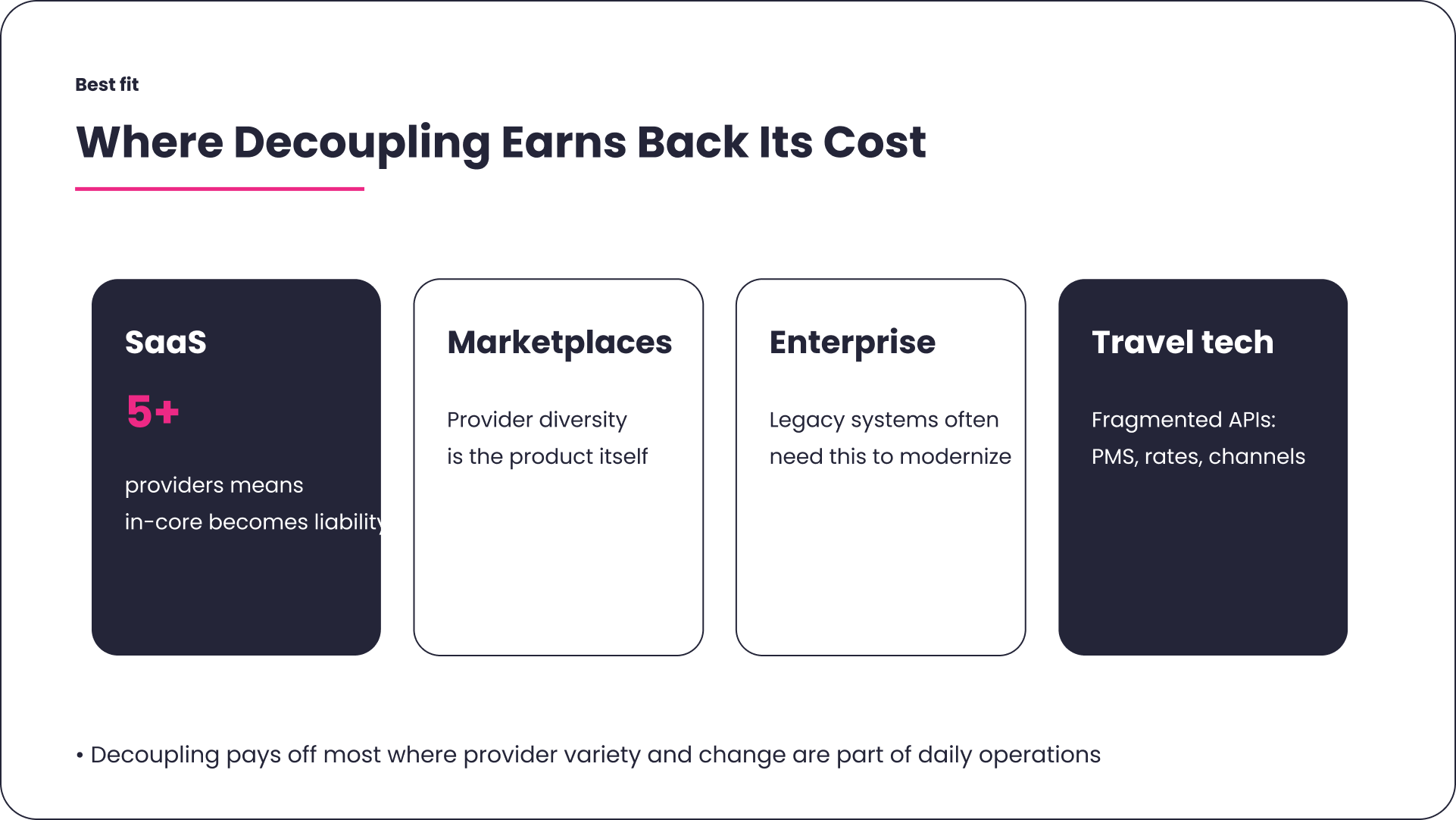

Decoupled architecture, in commerce platforms and elsewhere, earns back its complexity cost most reliably in a few specific environments.

SaaS products connecting to five or more third-party providers are the clearest case. Once the integration surface reaches that width, the in-core approach stops being a simplification and starts being a liability. The question isn’t whether to decouple – it’s whether to do it now or after the first major incident.

Marketplaces where provider diversity is a product feature face this even more directly. The platform has to absorb the operational complexity of providers with different APIs, different reliability profiles, and different data models. An integration layer isn’t optional – it’s the mechanism that makes the product promise technically deliverable.

Enterprise products with legacy system dependencies face a different version of the same problem. Dependencies accumulate over the years. Decoupling is often the only modernization path that doesn’t require a full rewrite – and in enterprise environments, that almost always means introducing an abstraction layer between the core and the legacy systems before anything else can change.

Hospitality and travel tech operate in an environment where the API landscape is unusually fragmented – channel managers, booking engines, rate systems, PMS platforms, each with their own versioning cadence and their own interpretation of “availability.” Decoupled software architecture in this context isn’t a best practice. It’s the difference between a platform that can partner broadly and one that can only support the providers it was originally built for.


Should You Choose a Decoupled Monolith or Microservices Architecture?

For the team in this story, the integration layer was enough. The core remained a single deployable unit. Decoupling happened at the boundary between the core and the outside world – not inside the core itself.

For teams at a different scale, a decoupled microservices architecture is the natural extension of the same principles. Each service owns its domain, exposes a versioned API, communicates via events, and can be deployed independently. A change in the payment service doesn’t require a deployment of the reservation service. Service independence at this level means teams can move without coordinating every release.

The microservices middleware pattern that makes this operationally manageable is its own discipline – distributed tracing, service discovery, and observability. It’s worth understanding before committing to the topology.

Here’s how to think about the choice:

| | Decoupled Monolith | Decoupled Microservices |
|---|---|---|
| Deployment | Single deployable unit | Multiple independently deployable services |
| Integration logic | Integration logic separated from core via an integration layer | Each service owns its domain and data |
| Internal communication | Internal services communicate through versioned internal APIs | Communication via events or versioned APIs – no direct internal calls |
| Operational complexity | Lower operational complexity – one thing to deploy, monitor, and debug | Higher operational complexity – requires observability tooling, service discovery, and distributed tracing |
| Right fit | Up to ~10–15 integrations, small-to-mid teams | Large teams, high deployment frequency, complex domains |
| Modernization path | Extract services gradually as boundaries become clear | Significant upfront investment in infrastructure and tooling |
| Entry cost | Low | High |

The principle matters more than the deployment model. A well-structured monolith with a proper integration layer and versioned internal interfaces is more resilient than a microservices architecture where services call each other synchronously without contracts. Decoupling is a set of decisions about where logic lives and how components communicate – not a requirement to split everything into separate services.

When Is the Right Time to Decouple Your Architecture?

This is the part where most articles tell you to start immediately. That’s not always the right advice.

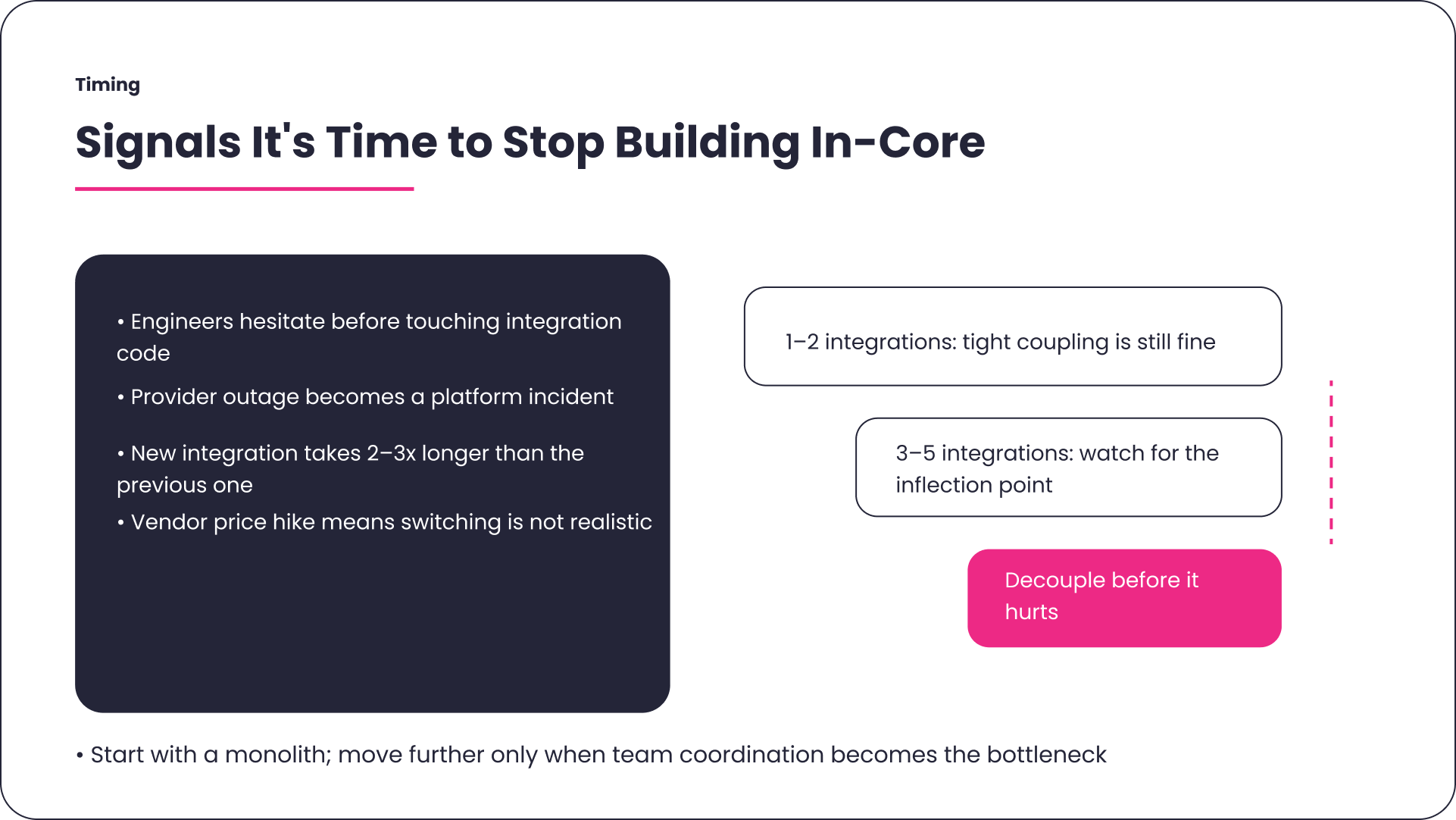

For an MVP with one or two integrations, tight coupling is a defensible choice. The cost of building a proper integration layer before you know what you’re integrating – before you know which providers will stay, which will change, which will disappear – isn’t always justified. Build fast, learn what you’re connecting to, and accept the refactoring cost later. That’s a reasonable trade.

The inflection point arrives when staying coupled costs more than decoupling. It doesn’t announce itself. After three to five integrations, the signs start appearing: engineers hesitate before touching integration code. A provider outage causes a platform incident instead of a contained degradation. A new integration takes two to three times longer than the previous one because the codebase is harder to extend without side effects. A vendor raises prices, and the business can’t seriously evaluate switching.

When those signals are present, how long an API integration takes depends heavily on whether the work happens now, with intention, or later, under pressure.

On the monolith vs microservices question specifically: if the signals above are present but the team is small, and the deployment frequency is low, a decoupled monolith is almost always the right first step. It solves the coupling problem without adding the operational complexity of distributed systems. Microservices become relevant when team size, deployment frequency, and domain complexity make the monolith itself a coordination bottleneck – not before.

The teams that decouple early tend to do it because someone has seen what happens when you don’t.


Common Integration Architecture Mistakes – And What They Cost

The team in this story made most of them.

They built everything in-core because “we only have two integrations right now.” That was true. It was also true of every team that ended up with twelve integrations and no integration layer – they all started with two.

They delayed the refactor because “we’ll do it properly once we have time.” What materialized instead was the Tuesday incident, then another one six months later, then the contract negotiation that forced the issue.

They decoupled the frontend from the backend early, which felt like progress (and was), but left integrations tightly coupled inside the core. The API-first approach they eventually adopted would have caught this earlier: designing integration contracts before writing the implementation, rather than extracting them after.

They skipped versioning on internal APIs because internal interfaces felt like implementation details. They discovered, when they tried to update the unified data model six months later, that “internal” doesn’t mean “consequence-free.” Internal consumers break just as reliably as external ones when the interface changes without notice.

None of these mistakes was careless. They were the natural result of building under pressure. The point of documenting them isn’t to suggest the team should have known better – it’s to give the next team a slightly earlier moment of recognition.

Decoupled Architecture Is a Strategic Decision, Not a Technical One, and ASD Team Knows How to Get It Right

Decoupled architecture isn’t a technical preference. It’s a strategic position.

A system that can swap providers, absorb breaking changes, and deploy independently isn’t just easier to maintain – it’s a different kind of business asset. The team that built it negotiates from a different position. They respond to market changes that a coupled system would turn into a six-month engineering project. They onboard a new partner without asking the whole platform to hold still.

The teams that get there don’t do it because they’re more disciplined or better resourced. They do it because they’ve felt the weight of a system that can’t change – or they’ve talked to someone who has.

ASD Team is a software development company that helps SaaS and B2B products – particularly in hospitality, travel, and marketplace sectors – map integration debt, design decoupled architecture layers, and move from tightly coupled systems to ones that can scale without constant rewrites.

Why does a tightly coupled system become a business risk, not just a technical one?

When your core logic is built around a specific provider’s data model, switching that provider stops being a technical decision and becomes a six-month engineering project. That’s when a vendor knows they have leverage – and uses it.

What is decoupled architecture in practical terms?

A system where a breaking change from an external provider lands at the integration layer, gets translated, and never reaches the core. Your business logic stops caring which vendor is on the other side – because the integration layer’s job is to make that irrelevant.

When do I move from in-core integrations to a decoupled architecture?

When engineers hesitate before touching integration code, when a provider outage becomes a platform incident, or when a contract renewal opens with a price increase you can’t push back on – the inflection point has usually already passed. If you’re not sure whether you’re there yet, our custom API integration services can help you map where the coupling is before it becomes a crisis.

Does decoupled architecture mean adopting microservices?

No. A well-structured monolith with a proper integration layer and versioned internal interfaces is more resilient than a microservices setup where services call each other without contracts. The principle matters more than the deployment topology. If you’re deciding how to structure that integration layer, it’s worth working through the difference between middleware integration and building it directly into your core before you commit.

What’s the most common mistake teams make with integrations?

Building everything in-core because “we only have two integrations right now” – and never finding the right moment to refactor, until the system has twelve integrations, three providers with breaking changes pending, and an on-call rotation that nobody wants to be on.

When does a decoupled monolith make more sense than microservices?

When the team is small, deployment frequency is low, and the main problem is provider coupling – not internal team coordination. A decoupled monolith solves the integration problem without the operational overhead of running distributed services. Move to microservices when the monolith itself becomes the bottleneck, not before.

What’s the difference between decoupling and microservices architecture?

Decoupling is about separating your core from external dependencies – providers, APIs, third-party systems. Microservices take that further by splitting the core itself into independently deployable services. You can have a fully decoupled system without microservices. You can’t have stable microservices without decoupling first.

How do I know if my current architecture is tightly coupled?

A few reliable signals: a vendor API update breaks multiple internal services, adding a new integration takes significantly longer than the previous one, engineers avoid touching integration code without a full regression cycle, or a provider outage causes a platform-wide incident rather than a contained degradation.